This week in Friday Props we tip our hats to the genius minds that train artificial intelligence to generate photographic images from line drawings. We also acknowledge cats. Props for #edges2cats.

The work comes from a paper by four authors at the Berkeley AI Research (BAIR) Laboratory, University of California, Berkeley, titled *Image-to-Image Translation with Conditional Adversarial Networks*.

The research tackles one of the fundamental problems in image processing: "translating" an input image into a corresponding output image. From the paper's abstract:

> We investigate conditional adversarial networks as a general-purpose solution to image-to-image translation problems. These networks not only learn the mapping from input image to output image, but also learn a loss function to train this mapping. This makes it possible to apply the same generic approach to problems that traditionally would require very different loss formulations. We demonstrate that this approach is effective at synthesizing photos from label maps, reconstructing objects from edge maps, and colorizing images, among other tasks. As a community, we no longer hand-engineer our mapping functions, and this work suggests we can achieve reasonable results without hand-engineering our loss functions either.
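The part about the network learning "a loss function" is easier to picture with the objective the paper trains on: a conditional GAN loss, where a discriminator D scores (input, output) pairs as real or generated, plus an L1 term (weighted by λ) that keeps the generated image close to the real one. Below is a minimal, framework-free sketch of those two losses, assuming the discriminator's outputs are already probabilities in (0, 1) and images are flattened into lists of pixel values. The function name `pix2pix_losses` and its parameters are my own hypothetical choices for illustration, not the API of pix2pix or its TensorFlow port; the default `lam=100.0` follows the λ the paper reports using.

```python
import math
from typing import Sequence, Tuple

EPS = 1e-12  # guards against log(0)


def pix2pix_losses(
    d_real: Sequence[float],
    d_fake: Sequence[float],
    target: Sequence[float],
    generated: Sequence[float],
    lam: float = 100.0,
) -> Tuple[float, float]:
    """Return (discriminator_loss, generator_loss) for one toy batch.

    d_real:    D(x, y) for real (input, photo) pairs, each in (0, 1)
    d_fake:    D(x, G(x, z)) for (input, generated) pairs, each in (0, 1)
    target:    pixel values of the real output image y
    generated: pixel values of the generated image G(x, z)
    """
    # D maximizes log D(x, y) + log(1 - D(x, G(x, z))),
    # so we minimize the negative of its batch mean.
    d_loss = -sum(
        math.log(r + EPS) + math.log(1.0 - f + EPS)
        for r, f in zip(d_real, d_fake)
    ) / len(d_real)

    # G uses the common non-saturating form: maximize log D(x, G(x, z)).
    # This adversarial term is the "learned" part of the loss.
    g_adv = -sum(math.log(f + EPS) for f in d_fake) / len(d_fake)

    # Mean absolute (L1) difference between real and generated pixels.
    l1 = sum(abs(t - g) for t, g in zip(target, generated)) / len(target)

    return d_loss, g_adv + lam * l1


if __name__ == "__main__":
    # Toy numbers: D is fairly confident real pairs are real and fakes are fake.
    d_loss, g_loss = pix2pix_losses(
        d_real=[0.9, 0.8],
        d_fake=[0.2, 0.1],
        target=[0.0, 0.5, 1.0],
        generated=[0.1, 0.5, 0.9],
    )
    print(f"D loss: {d_loss:.3f}, G loss: {g_loss:.3f}")
```

In a real model both D and G are neural networks updated in alternation, so the adversarial term keeps changing as D gets better at spotting fakes. That moving target is what the abstract means by a learned loss, as opposed to a fixed, hand-engineered one like the L1 term alone.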

I'm not going to pretend I understand all the maths involved in the magic of image recognition and generation, but the end result is a very approachable project built to demo Interactive Image Translation with a TensorFlow port of pix2pix. You don't have to understand what that means to play with the demo that turns line drawings into cat photos.

Try the Image-to-Image "edges2cats" Demo Here

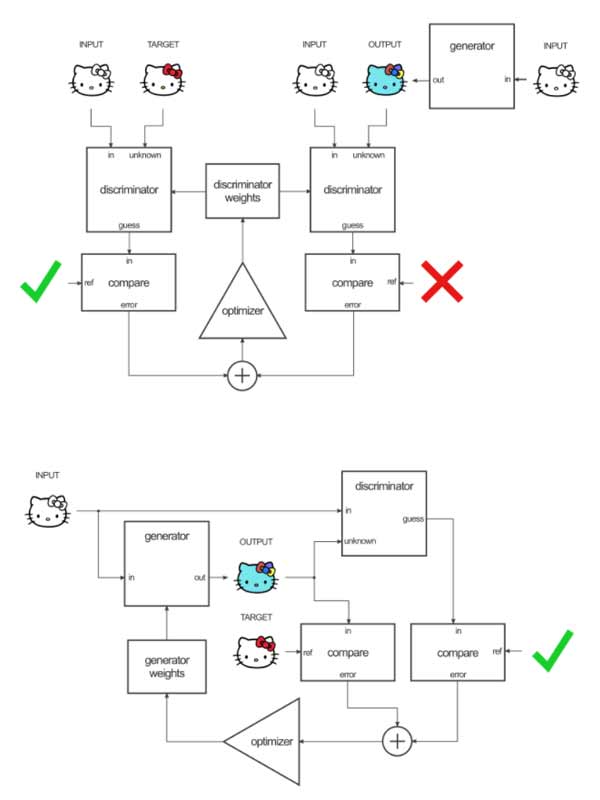

As I was researching this Friday Props, I ran across the flowchart explaining how the different components are trained, and of course I had to capture it. Those who know, know.

But that's not all. It turns out that cats in general are a VERY popular subject for papers in machine learning and image processing. Seriously... take a look.

=======

Learn more:

Generative Adversarial Network

Paper Authors:

Opinion: